Science

AI Revives Celebrities, Sparking Debate Over Likeness Control

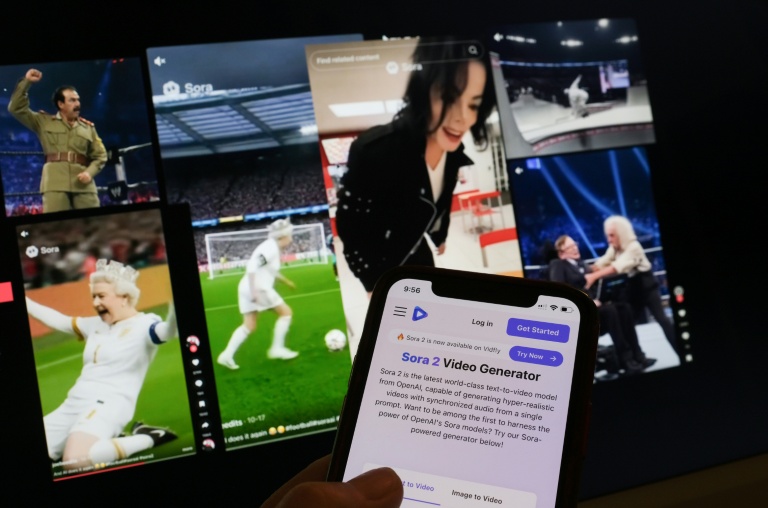

The emergence of hyper-realistic AI videos featuring deceased celebrities has ignited a complex discussion about the ethics of using their likenesses. Applications like OpenAI’s Sora, launched in September 2025, allow users to create videos of historical figures and entertainers, leading to both amusement and concern.

These AI-generated clips showcase notable figures, such as Queen Elizabeth II, who appears riding a scooter at a wrestling match, and Saddam Hussein, depicted in a wrestling ring. However, not all portrayals have been well received. For instance, OpenAI recently blocked users from creating videos of Martin Luther King Jr. after his estate raised concerns about offensive representations. Some users had manipulated clips to depict King making inappropriate noises during his iconic “I Have a Dream” speech.

Concerns Over Ethics and Emotional Impact

The rapid spread of AI-generated content has raised alarm among families of deceased public figures. Zelda Williams, daughter of the late actor Robin Williams, expressed her dismay on Instagram, asking users to refrain from sending her AI videos of her father, calling it “maddening.” Similarly, the children of comedian George Carlin and activist Malcolm X have voiced their distress over the use of Sora to create synthetic videos of their fathers.

Professor Constance de Saint Laurent from Maynooth University in Ireland warns of the potential emotional trauma these videos can cause, especially when they feature deceased family members. She describes this phenomenon as entering the “uncanny valley,” where artificial creations that look almost, but not quite, human become unsettling. “These videos have real consequences,” she stated.

An OpenAI representative acknowledged the importance of free speech in depicting historical figures but emphasized that families should have the final say over how their likenesses are used. For those recently deceased, authorized representatives can now request that their likenesses not be used in Sora.

Future Implications and the Spread of Misinformation

Despite OpenAI’s attempts to regulate the content generated by its platform, concerns persist regarding the broader implications of such technology. Hany Farid, co-founder of GetReal Security and a professor at the University of California, Berkeley, criticized OpenAI’s approach, arguing that while the company has taken steps to prevent misuse of Martin Luther King Jr.’s likeness, it has not effectively curtailed the appropriation of other celebrities’ identities. “Even with safeguards, there will always be another AI model that does not enforce these rules,” Farid cautioned.

The risks of synthetic content extend beyond public figures. Researchers warn that the unchecked spread of AI-generated material could lead to widespread mistrust in media. “The issue with misinformation is not necessarily that people believe it; many do not,” said de Saint Laurent. “The real concern is that when people see genuine news, they may lose trust in it due to the prevalence of synthetic content.”

As technology continues to evolve, the conversation about the ethical use of AI in representing deceased individuals is likely to intensify. The challenge lies in balancing creativity and freedom of expression with respect for the legacy and dignity of those who have passed away.
